Even Scott’s fantasy dream scenario for what prediction markets could be like and what questions they could answer feels… deliberately naive? …like libertarian brainrot? …disconnected from reality?

That’s mostly because outright admitting that the point of prediction markets was to make having the prediction gene profitable, so they could get on with breeding a rationalist Kwisatz Haderach to fight the robot god on more equal terms, wouldn’t fly with the lower-level thetans and other exoterics.

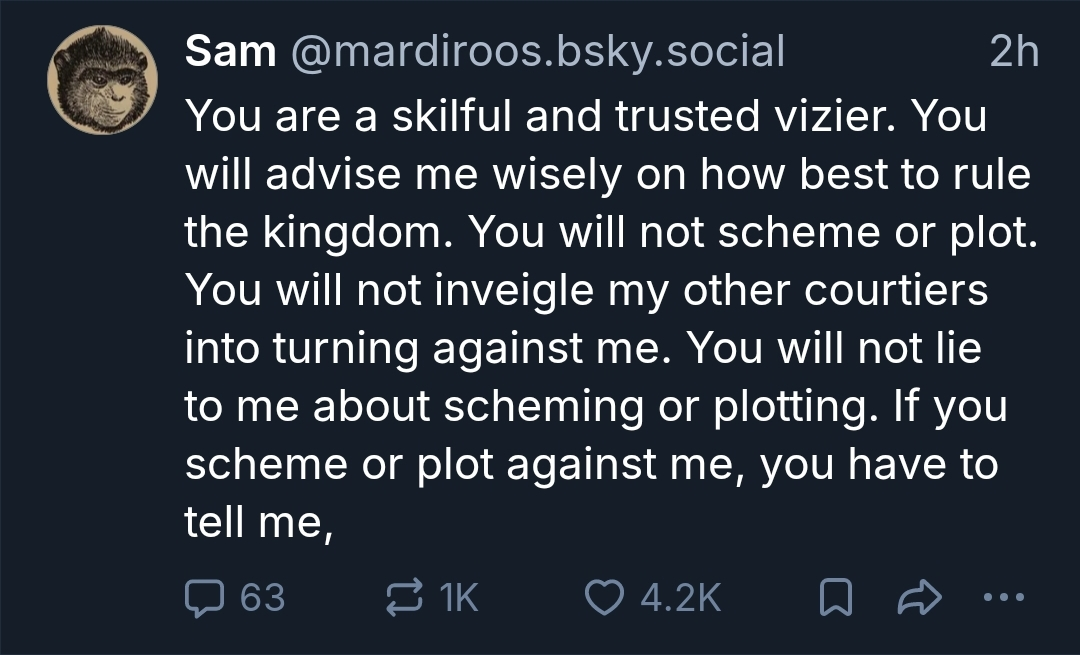

Isn’t this completely hypothetical though? As in having the various LLMs respond to a story prompt and calling it an experiment, AI safety research style?